What not.bot Doesn't Know

Most companies tell you what they know about you. We're going to tell you what we don't know about you. Because what a company doesn't collect matters more than what it promises not to share.

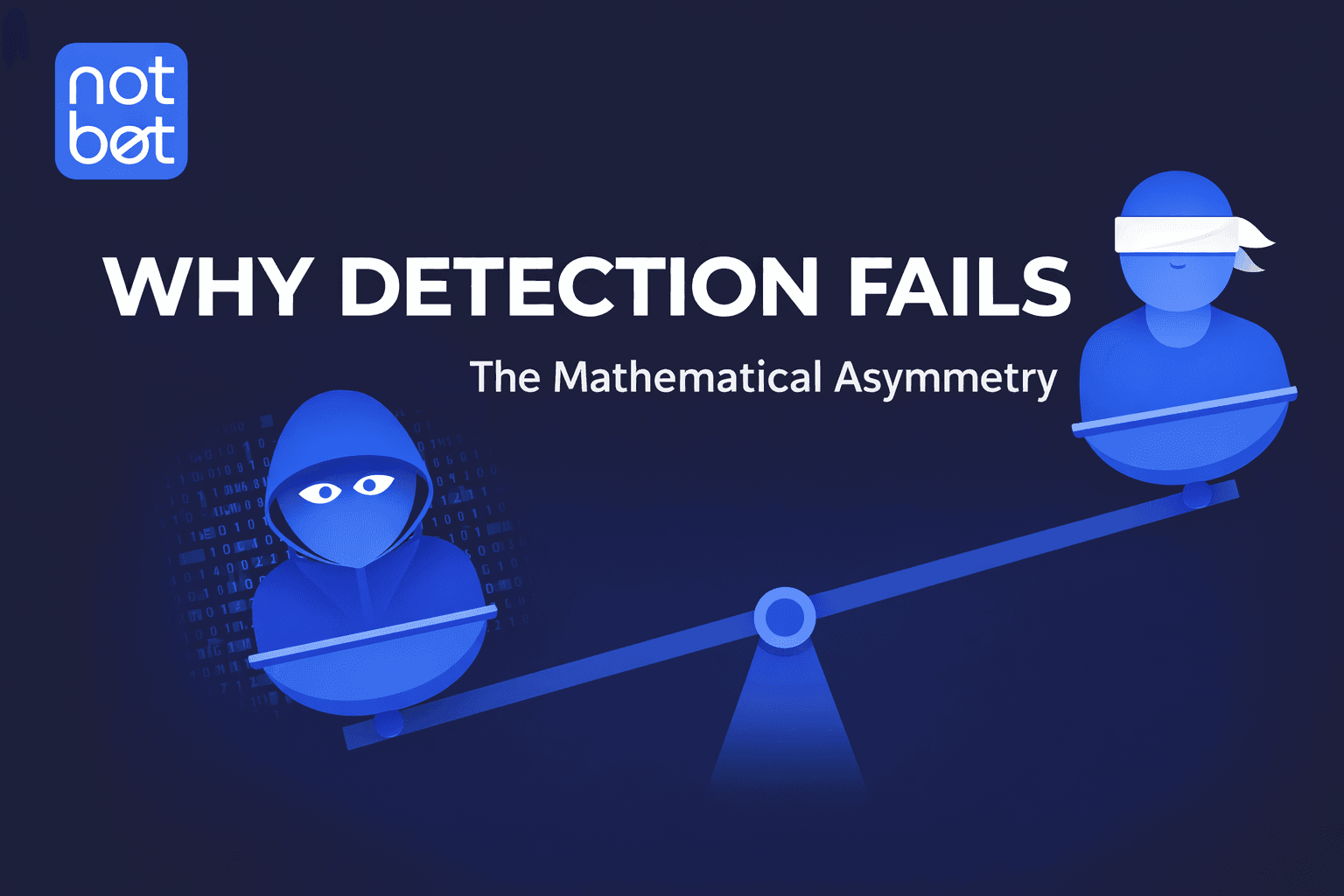

Here's a truth that most security vendors won't tell you: detection-based security is mathematically doomed. Not eventually doomed. Structurally doomed from day one.

Researchers at MIT, Stanford, Microsoft, and OpenAI have been studying Personhood Credentials—digital tokens that prove you're a real human without revealing who you are. not.bot meets essentially every requirement.

In July 2025, OpenAI's ChatGPT agent clicked through a CAPTCHA. Casually. No special prompting. No hacks. The test designed to separate humans from bots has failed. The bots won.

In March 2025, a finance director in Singapore authorized a $499,000 payment after a Zoom call with senior leadership. None of those executives were real. Every face was a deepfake.

This week, three publications dove deep into a question that's becoming impossible to ignore: How do we rebuild trust online when bots now outnumber humans?

All it takes is three seconds of audio to clone an executive's voice. Bad actors are already using AI to impersonate leadership, creating a massive financial threat that could bankrupt your company overnight.

A message to political candidates in the voice of Abraham Lincoln on the grave peril of deepfakes threatening campaigns and elections.

Do your voters actually trust you? Deepfake content is about to flood the internet, and these aren't just blurry, obviously fake videos anymore. They're sophisticated campaign weapons.

If you're a journalist, you know that trust is everything. So what happens when scammers can hijack your face, your voice, your reputation? It's already happening.

AI needs just three seconds of audio to create a perfect clone of your voice. Welcome to the copy-paste crisis threatening every content creator.

Your face is being stolen. Right now. And your fans have no idea it's happening. Scammers are using AI to create deepfakes of celebrities, putting words in their mouths and using their trusted images to sell fake products.

It's over, guys. The golden age of catfishing? Dead. Gone. Kaput. A satirical look at how not.bot is ruining the scammer business model.

What if we've been looking at the whole AI problem wrong? The real problem isn't detecting AI—it's a lack of accountability.

Our CEO Ken Griggs recently joined Ash Brown on the Ash Said It Show for a timely conversation about digital identity, privacy, and the UK's proposed nationwide digital ID system.

This week, we're joining hundreds of AI practitioners, technologists, and community leaders at AI Festivus 2025—a two-day virtual event celebrating human-centered AI.

How do you verify what's real in an age of AI? The C-SUITE EDGE invited Ken Griggs (CEO of Julia Social) to discuss digital trust and new tools to combat AI deception.

A Hong Kong firm lost $25 million after an employee authorized transfers during a video call where every participant was a deepfake.

The future of business demands ethical entrepreneurship, where transparency and trust are the new currencies of success.

In a digital landscape defined by data breaches and AI-driven uncertainty, trust has become the most valuable asset a company possesses.

Deepfakes have evolved from a novelty into a sophisticated weapon, making small businesses a prime target for AI-driven attacks and scams.

Unlike large corporations with vast security teams, small businesses are increasingly becoming the silent targets of sophisticated AI fraud.

Our digital lives feel like the Wild West, crowded with bots, deepfakes, and data harvesters. Human verification is the solution.

As a solo entrepreneur, you rely on AI tools to compete, but this dependence comes with a steep price: an escalating threat to customer privacy.